A Glimpse Into the AI-Powered Future

AI is no longer a concept of the future; it’s reshaping our lives now.

From Meta’s smart glasses redefining how we think, to YouTube’s AI quietly gauging our age, and the NHS slashing wait times with virtual physio, one thing is clear: AI is becoming the new frontline of interaction, safety, and care.

Artificial intelligence is moving beyond its role as a background tool; it is becoming an active decision-maker in how we live, work, and experience the world.

Mark Zuckerberg’s push for AI-powered glasses reimagines human-computer interaction, turning wearables into gateways for cognitive enhancement. Google’s move to predict user age with AI signals a future where digital platforms rely less on what we declare and more on how we behave, reshaping online safety and accountability. Meanwhile, the NHS trial of Flok Health’s physiotherapy app illustrates AI’s tangible impact in healthcare, where efficiency and accessibility can mean faster recoveries and reduced pressure on human clinicians.

But its rise brings a challenge: making sure the speed of innovation doesn’t outpace trust, access, or human oversight. The next chapter isn’t just about smarter systems; it’s about smarter choices in how we use them.

Mark Zuckerberg’s Bold Claim: No AI Glasses? Prepare to Fall Behind

Meta CEO Mark Zuckerberg is placing a high-stakes bet on the future of human-computer interaction, and it’s happening right on your face.

During Meta’s Q2 2025 earnings call and in a freshly published blog post on the rise of “superintelligence,” Zuckerberg made his position clear: smart glasses powered by artificial intelligence aren’t just a cool gadget; they’re the next evolutionary leap. In his view, people without them may soon be left behind cognitively.

“If you’re not wearing AI glasses in the next few years,” he warned, “you could be at a serious cognitive disadvantage.” For Zuckerberg, these glasses aren’t just about convenience.

He likened them to contact lenses: invisible but indispensable. “Once you get used to them, functioning without them will feel like thinking with the lights off.”

Meta’s Reality Labs is doubling down on this frontier, fusing AI with wearables in a push to rewire how we think, learn, and interact with the digital world. The company’s long-term play? To make AI an ambient, always-on co-pilot, with glasses as the gateway.

The message is clear: in Meta’s future, intelligence enhancement won’t just come from what you know, but what you wear.

From Novelty to Necessity

While smart glasses were once dismissed as futuristic novelties, Meta sees them as essential tools in the age of ambient intelligence, a future in which AI isn’t summoned but surrounds us, seamlessly integrated into everyday life.

Reality Labs, Meta’s research division focused on extended reality (XR), is at the center of this push. Over the past several years, the lab has invested billions in developing the hardware and software needed to merge AI with augmented reality. The goal is clear: to create devices that can understand your surroundings, respond in real time, and act as a personal assistant that’s always present, yet unobtrusive.

Zuckerberg believes this shift is inevitable. As generative AI becomes more advanced and personalized, users will demand faster, more natural ways to engage with it. Typing, swiping, and tapping may soon feel clunky and inefficient by comparison.

“The next logical step in our digital evolution is wearability,” Zuckerberg said. “We’re building tools that don’t just live in your pocket, they live with you, adapt to you, and think alongside you.”

Reimagining Human-AI Collaboration

Imagine walking down the street and having real-time language translation whispered into your ear. Or receiving instant visual explanations for complex objects around you. Or being coached through a task by AI that understands your context, location, and intent, all without ever pulling out your phone.

That’s the vision Meta is building toward: AI that is not just accessible but ambient, a constant companion that enhances cognition, supports productivity, and even shapes memory recall and decision-making.

The implications are far-reaching. For education, it means personalized learning delivered through real-world interaction. For work, it could mean intelligent task management without screens. For healthcare, AI could provide real-time alerts, diagnostics, or accessibility tools for patients with cognitive or sensory impairments.

But for all its promise, this evolution also raises critical questions about privacy, ethical AI design, data usage, and the psychological impact of outsourcing certain types of thinking to machines.

The Race for the AI Wearables Market

Meta isn’t alone in this race. Apple, Google, and Samsung have all invested heavily in next-gen wearables. But Meta has a head start in pairing AI with AR, thanks to its work on products like Ray-Ban Meta Smart Glasses and the Quest headset lineup. These devices already feature AI-powered camera, voice, and interaction capabilities, and the next iterations promise even deeper integration.

Still, public adoption will be the ultimate test. Many consumers are wary of wearables that can record, track, and analyze personal data. Concerns over surveillance, cognitive overload, and the social implications of always-on technology remain very real. Zuckerberg acknowledges that trust and transparency will be vital.

“We’re not just building hardware,” he said. “We’re building relationships between people and machines, and that needs to be done carefully.”

In Mark Zuckerberg’s vision, AI glasses won’t just help you see better; they will help you think better.

YouTube’s Watching Closer: Google to Use AI to Verify User Age on YouTube

Think you can outsmart YouTube with a fake birthdate? Not for long.

Google is stepping up its efforts to protect younger users online, and this time, it’s putting artificial intelligence in charge.

Beginning August 13, the tech giant will launch a major pilot in the United States that uses advanced AI to estimate users’ ages on YouTube, not by what they claim, but by how they behave. This new system, designed to enhance child safety on one of the world’s most heavily used video platforms, will analyze users’ viewing patterns, interaction habits, and activity history to determine whether someone is likely under or over 18.

The aim?

To tighten control over age-restricted content and prevent minors from accessing material that isn’t appropriate for their age, something that’s been notoriously difficult to enforce through self-reporting alone.

“For years, users could simply bypass age gates by entering a fake birthdate,” a Google spokesperson said. “But that loophole no longer aligns with today’s safety standards, especially in an era where generative AI and behavioral data can provide smarter safeguards.”

How It Works: Behavior Over Birthdates

The new system uses machine learning models trained to identify digital behavior typically associated with different age groups. For instance, an account that frequently engages with animated content, avoids mature topics, or has limited interaction history may raise flags suggesting the user is younger than 18, regardless of what age was entered at sign-up.

Conversely, an account consistently engaging with more mature content, subscribing to adult-centric channels, or showing usage patterns typical of adult users may be flagged as eligible for unrestricted access.

While the exact algorithms and decision thresholds remain proprietary, the system is built to be adaptive, learning from large sets of anonymized user data and continuously refining its accuracy. It won’t rely on a single video or interaction but rather a pattern of behavior over time.

Critically, if the AI flags an account as potentially underage, that account may see tighter restrictions on videos involving mature themes, sensitive subjects, or monetized content with age gating. YouTube says it will also explore ways to prompt users for additional verification when age predictions and user declarations don’t align.
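Google hasn't published how its model works, and its algorithms and thresholds are proprietary. For illustration only, the principle of judging age from a pattern of behavior over time instead of a single video can be sketched as a toy logistic score averaged across many sessions. Everything in the sketch is hypothetical and was not trained on any real data: the signals, weights, thresholds, and action names (`Session`, `WEIGHTS`, `MIN_SESSIONS`, `decide`). When the prediction and the declared age disagree, the sketch restricts the account and asks the user to verify, mirroring the behavior described above.

```python
import math
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical per-session signals; YouTube's real features are not public.
@dataclass
class Session:
    animated_share: float       # fraction of watch time on animated content (0-1)
    mature_share: float         # fraction of watch time on mature topics (0-1)
    adult_channel_share: float  # fraction of views from adult-centric channels (0-1)

# Hypothetical weights for a toy logistic model (positive = more "minor-like").
WEIGHTS = {"animated_share": 2.0, "mature_share": -3.0, "adult_channel_share": -0.5}
BIAS = -0.5
MIN_SESSIONS = 5          # never decide from a single video or session
UNDER_18_THRESHOLD = 0.7  # hypothetical decision threshold

def minor_probability(sessions: List[Session]) -> Optional[float]:
    """Average each signal over the session history, then apply a logistic model."""
    if len(sessions) < MIN_SESSIONS:
        return None  # not enough history to form a pattern
    n = len(sessions)
    averages = {name: sum(getattr(s, name) for s in sessions) / n for name in WEIGHTS}
    z = BIAS + sum(WEIGHTS[name] * value for name, value in averages.items())
    return 1.0 / (1.0 + math.exp(-z))

def decide(sessions: List[Session], declared_age: int) -> str:
    """Combine the behavioral estimate with the age the user declared."""
    p = minor_probability(sessions)
    if p is None:
        return "use_declared_age"
    predicted_minor = p >= UNDER_18_THRESHOLD
    if predicted_minor and declared_age >= 18:
        # Prediction and declaration disagree: restrict and offer verification.
        return "restrict_and_prompt_verification"
    if predicted_minor or declared_age < 18:
        return "restrict"
    return "unrestricted"
```

The minimum-history rule reflects the point that one video is not enough. Requiring verification only on disagreement is one plausible way to limit friction for adults whose viewing matches their declared age.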

Why Now? A Changing Regulatory Landscape

This rollout comes amid growing global pressure on tech companies to do more to protect young users. In recent years, governments across the U.S., U.K., and EU have introduced or strengthened child safety regulations, including the UK’s Age Appropriate Design Code (commonly known as the “Children’s Code”) and pending U.S. legislation focused on children’s online safety.

Platforms like TikTok, Instagram, and Snapchat have already begun experimenting with AI-based age estimation tools using facial analysis or engagement behavior. Google’s approach with YouTube, however, leans heavily on behavioral analysis, a method the company believes offers a balance between safety, user privacy, and seamless user experience.

“This move is part of a larger strategy to make YouTube not only smarter but safer,” said one industry analyst. “Google is betting that behavioral AI can outperform traditional methods of age verification, and potentially avoid the friction of invasive identity checks.”

Concerns, Safeguards, and the Path Ahead

As with any AI deployment, the new system raises critical questions about accuracy, bias, and transparency. Could the model wrongly flag adult users as underage? Will content recommendations become less relevant if users are misclassified?

Google has acknowledged these concerns and stated that any decision to restrict content will be accompanied by clear feedback and appeal mechanisms. Users will be able to correct their age through traditional verification methods if needed.

The company also emphasized that no facial recognition or biometric data is being used. All age estimation will be based solely on in-app behavior and usage patterns, and the system will comply with GDPR, COPPA, and other major data protection frameworks.

Still, advocacy groups have cautioned that the approach must be rolled out responsibly. “Using AI to estimate age is promising, but it must be accurate, fair, and transparent,” said a spokesperson for the Center for Digital Democracy. “We also need to ensure that it doesn’t lead to overblocking or discriminatory experiences for users from different backgrounds.”

The Bigger Picture: AI as Digital Gatekeeper

This development reflects a broader shift across the tech industry: the growing role of AI not just as a content recommender or search assistant, but as a gatekeeper, quietly determining what users can see, do, or access.

For YouTube, this move is another step in reengineering trust with parents, educators, and regulators, and a test case for how far AI can (and should) go in moderating human behavior online.

The bottom line? YouTube is no longer just listening to what you say. It’s watching how you act, and it’s using that data to decide if you’re really old enough to be there.

As digital experiences become more personalized, predictive, and protected, Google’s AI age estimation system could set the tone for how other platforms handle identity and accountability in the years ahead.

AI-Driven Physiotherapy App Cuts NHS Back Pain Waiting Lists by Over Half

A groundbreaking AI-powered physiotherapy app has significantly reduced waiting times for back pain treatment in an NHS pilot, slashing queues by 55% and freeing up thousands of clinician hours.

The three-month trial, led by Cambridgeshire Community Services NHS Trust and involving over 2,500 patients, tested the Flok Health app, a first-of-its-kind digital physiotherapy platform regulated by the Care Quality Commission.

Using artificial intelligence, the app triages, treats, and discharges patients suffering from musculoskeletal (MSK) conditions, particularly lower back pain, a condition that accounts for nearly a third of the trust’s caseload. The digital programme has already saved approximately 2,500 hours of clinician time, redirecting resources toward more complex and urgent cases.

Jayne Davies, Clinical Lead for MSK Services at the trust, called the technology a potential “game changer” for the NHS.

“We simply can’t train or hire enough staff to meet the rising demand,” she said. “If rolled out thoughtfully, this could transform how we deliver care.”

Fast, Flexible, and Effective Care

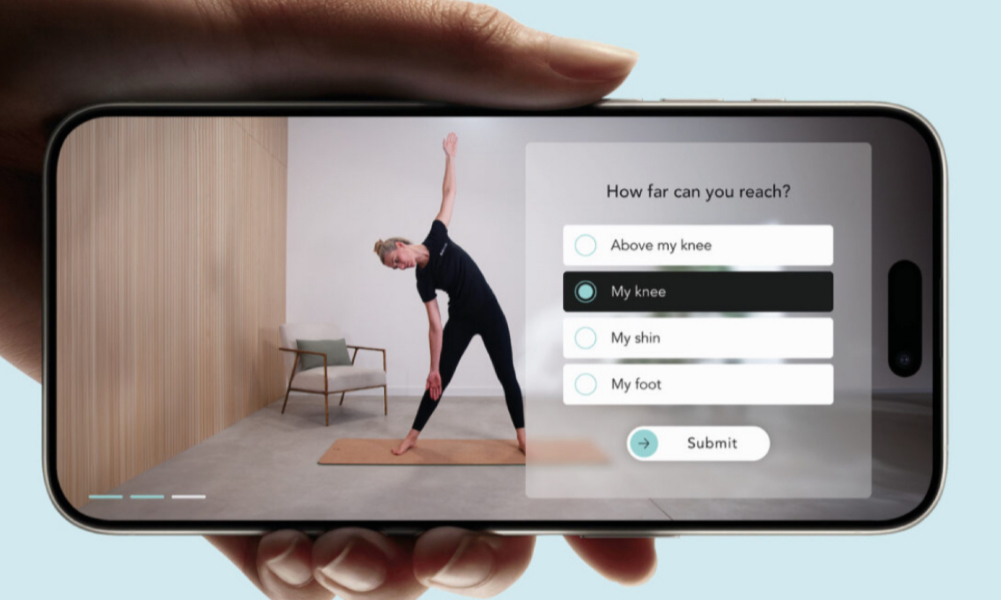

Patients using the app undergo a personalised assessment via AI, which tailors a treatment plan featuring professionally guided exercises demonstrated on-screen by experienced NHS physiotherapist Kirsty Henderson.

The pilot’s results were overwhelmingly positive:

80% of patients found the app equal to or better than in-person treatment.

98% were fully treated and discharged digitally.

Waiting times dropped from over 18 weeks to under 10 weeks.

Clinician time saved: 856 hours per month.

Sharon McMahon, a teacher from Cambridgeshire, had been waiting 17 weeks for treatment before accessing the app. “I started the same day,” she said. “The flexibility and guidance helped me recover in just two weeks.”

Annys Bossom, who has dealt with chronic back pain for 25 years, was initially skeptical. “At first I was disappointed to be offered an app, but it was so intuitive, and the exercises were unlike anything I’d done before. It worked.”

A Complement to Human Care, Not a Replacement

Despite concerns around AI in healthcare, clinicians emphasize that the app enhances, rather than replaces, traditional care.

“This won’t put physiotherapists out of work,” said Ms. Henderson. “It gives us more time to focus on patients who truly need face-to-face care.”

The Chartered Society of Physiotherapy acknowledged the urgency.

“There’s a crisis in physiotherapy,” said Ash James, the society’s director of practice and development. “When designed safely and with proper oversight, technology like this can be a vital tool for easing pressure on the NHS.”

Co-founder Finn Stevenson, a former professional rower, said his experience with long treatment delays inspired the platform.

“There simply aren’t enough clinicians. This app offers immediate, high-quality support, like having a video call with a physiotherapist whenever you need it.”

The Future of Digital Health?

MSK disorders remain one of the UK’s top causes of disability and work absence, with back pain affecting 80% of adults at some point in their lives. Yet skepticism around AI in healthcare persists. According to The Health Foundation, one in six people in the UK worry that AI may worsen care quality. Experts agree that building public trust and ensuring robust regulation are essential.

Flok Health has built safeguards into the system: confusing or concerning responses from patients are flagged for direct clinician review, and patients can message physiotherapists for support throughout.
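Flok Health hasn't detailed how its flagging works. A safeguard of the kind described, where confusing or concerning answers go to a clinician, can be sketched as a simple rule-based escalation check. This is a hypothetical illustration, not Flok's implementation. The answer codes, the `confidence` score, the threshold, and the names `Response`, `TriageResult`, and `triage` are all assumptions. A real service would rely on a clinician-approved red-flag list.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical, illustrative red-flag answer codes; not a clinical screening list.
RED_FLAG_CODES = {"bladder_or_bowel_change", "saddle_numbness", "unexplained_weight_loss"}
CONFUSION_THRESHOLD = 0.6  # hypothetical: below this, treat the answer as unclear

@dataclass
class Response:
    question_id: str
    answer_code: str   # structured option the patient selected
    confidence: float  # hypothetical score for how clearly the answer was understood (0-1)

@dataclass
class TriageResult:
    escalate: bool
    reasons: List[str] = field(default_factory=list)

def triage(responses: List[Response]) -> TriageResult:
    """Flag concerning or unclear answers so a clinician reviews them directly."""
    reasons = []
    for r in responses:
        if r.answer_code in RED_FLAG_CODES:
            reasons.append(f"{r.question_id}: concerning answer '{r.answer_code}'")
        elif r.confidence < CONFUSION_THRESHOLD:
            reasons.append(f"{r.question_id}: unclear answer (confidence {r.confidence:.2f})")
    # Any single flag is enough to route the patient to a human.
    return TriageResult(escalate=bool(reasons), reasons=reasons)
```

The sketch errs toward escalation: one concerning or unclear answer is enough to bring in a clinician. That design matches the article's point that the app complements human care rather than replacing it.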

Ms. Henderson added: “It’s still critical we’re here to intervene when something more serious is suspected. But for many, this is safe, effective care, on their terms.”

As digital health solutions evolve, Flok’s trial offers a glimpse into a future where smart technology lightens the NHS’s load without compromising quality or care.