Building Smarter Futures: Where Intelligence Meets Intent

In a week defined by technological ambition and moral recalibration, three stories reveal the delicate dance between progress and responsibility. Thinking Machines Lab’s $2 billion raise signals a turning point in the AI race—where safety is no longer optional, but foundational. Across the globe, Nvidia’s CEO Jensen Huang applauds China’s AI ascent, reminding us that innovation thrives through collaboration, not isolation. And in labs far from the spotlight, the NeuroWeave breakthrough blurs the line between brain and machine, offering both a lifeline to those battling neurodegenerative diseases and a blueprint for the next generation of human-inspired AI.

Together, these moments sketch a new reality—one where intelligence, whether artificial or biological, demands empathy, precision, and shared purpose. The future isn’t being built in silos; it’s being co-written across borders, disciplines, and neural networks. And the world is watching.

Thinking Machines Lab Secures $2 Billion to Pioneer Safer AI Frontier

In one of the most significant funding rounds of 2025, Thinking Machines Lab, a U.S.-based AI research company, has raised $2 billion to scale its mission of developing safe, transparent, and controllable artificial intelligence systems.

The round was led by a coalition of investors including Sequoia Capital, OpenAI Startup Fund, Altimeter Capital, and sovereign wealth funds from the Middle East and Southeast Asia. It signals a strategic shift in how the tech world views the AI arms race: the goal is no longer just to be faster, but to be safer.

A New Chapter in AI Responsibility

Founded by former DeepMind and MIT researchers, Thinking Machines Lab has long positioned itself as an alternative to Big Tech AI labs, advocating for alignment-first approaches to AI development. Rather than focusing solely on scale and commercial applications, the lab prioritizes building AI models that can explain their decisions, follow human intent, and reject harmful commands.

“AI safety can’t be an afterthought; it has to be engineered into the DNA of these systems,” said CEO Dr. Maya Trask during the announcement in San Francisco. “This funding gives us the capacity to scale our research, attract global talent, and create new frameworks for testing and verifying advanced AI systems before they are deployed in the wild.”

Global Attention on Safety, Not Just Speed

This investment comes amid growing scrutiny of AI’s risks, especially in light of recent incidents involving deepfakes, misinformation campaigns, and runaway model behavior. Governments in the U.S., the EU, and China have all pushed for increased regulation, and consumer trust in AI remains fragile.

The $2 billion will be used to build out a state-of-the-art AI safety campus, expand the lab’s open-source model interpretability tools, and launch a global partnership program to collaborate with universities, policymakers, and civil society organizations.

Investor Confidence in Alignment-Focused Research

According to insiders, Sequoia’s participation marks a bet not just on the future of AI, but on the need for governance-ready models that can thrive in increasingly regulated global markets.

“Thinking Machines is years ahead in thinking through how AI can be both powerful and principled,” said Altimeter Capital partner Bradley Nguyen. “We believe their commitment to safety will set the industry standard.”

Competition Heats Up in the ‘Safe AI’ Space

The move places Thinking Machines Lab in direct philosophical and technical competition with other major players such as Anthropic, OpenAI, and Google DeepMind, all of which are ramping up investment in constitutional AI and reinforcement learning from human feedback (RLHF).

However, analysts say Thinking Machines’ independence and sole focus on safety—as opposed to commercial deployment—may allow it to attract top researchers disillusioned with profit-driven labs.

What Comes Next?

With this raise, Thinking Machines Lab plans to release a public benchmark suite for evaluating AI safety and controllability, along with the beta launch of its aligned AI architecture, TML-Core, by Q4 2025.

Its roadmap also includes multilingual alignment research, AI ethics simulation labs, and a new division focused on AI in governance and diplomacy.

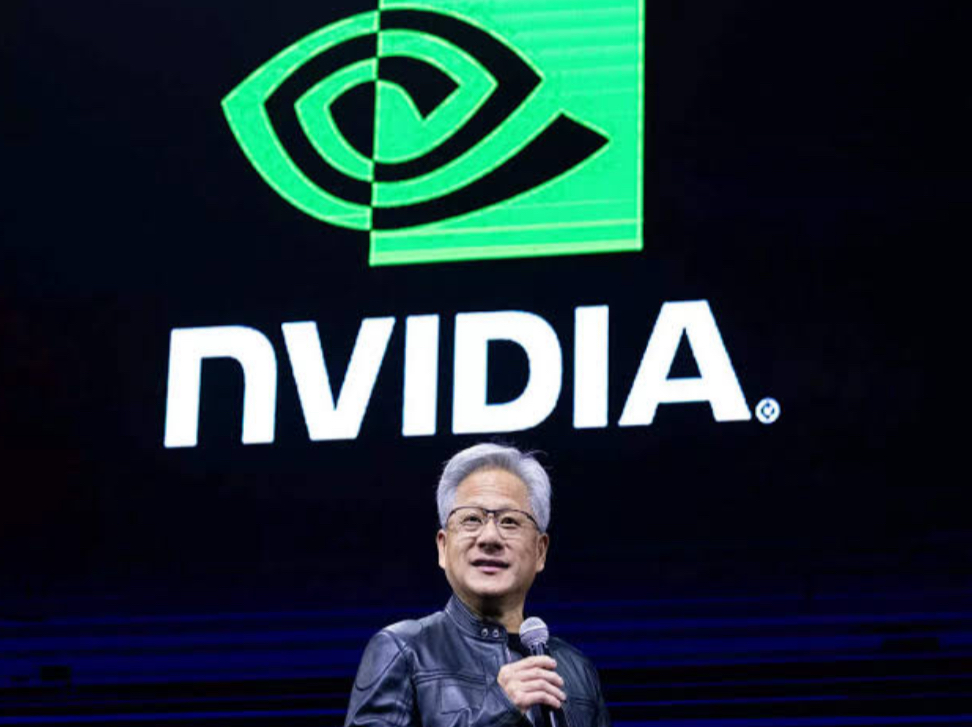

Nvidia CEO Praises China’s AI Momentum as a “Catalyst for Progress” at Beijing Tech Expo

In a rare appearance on Chinese soil, Nvidia CEO Jensen Huang delivered an emphatic endorsement of China’s growing influence in artificial intelligence, calling it a “catalyst for global progress” during his keynote speech at the Beijing Global Tech Expo 2025.

Huang, whose company is at the forefront of AI chip innovation, addressed a packed auditorium of researchers, policymakers, and industry leaders, emphasizing that “the future of AI will be shaped by the contributions of many nations—and China is playing a central role in that vision.” His remarks were met with resounding applause, underscoring the country’s swelling national pride in its AI advancements despite persistent geopolitical tensions.

The speech came amid a complex landscape. While Nvidia faces export restrictions imposed by the U.S. government on advanced AI chips destined for Chinese firms, the company continues to find creative ways to engage with China’s rapidly growing AI ecosystem. Huang’s comments appeared carefully balanced—celebrating Chinese innovation without directly confronting Washington’s regulatory stance.

China, home to giants like Baidu, Alibaba, Tencent, and SenseTime, has been investing heavily in generative AI, robotics, and smart infrastructure. At the expo, domestic firms showcased breakthroughs in real-time language translation, autonomous vehicle systems, and AI-driven medical diagnostics. Huang acknowledged these efforts, saying, “The work being done here—at both the institutional and commercial levels—is not just impressive, it’s essential. We’re witnessing innovation at a scale that will benefit the entire planet.”

His visit also included meetings with leaders from the Chinese Academy of Sciences and Tsinghua University, where he discussed potential academic collaborations and the importance of cross-border research on AI safety, compute efficiency, and environmental sustainability.

Notably, Nvidia’s presence at the expo also signaled that, despite trade barriers, there remains mutual interest in cooperation—particularly in areas such as AI model optimization, training tools, and green computing.

The speech has sparked global conversation. Some analysts interpret Huang’s remarks as a diplomatic nod to one of Nvidia’s most critical markets, while others see it as a reflection of the company’s realpolitik approach to staying influential in every AI hub, regardless of country.

Whatever the case, Huang’s message was clear: innovation cannot flourish in silos. And as AI continues to reshape the future of work, society, and power, the CEO of the world’s most valuable chipmaker believes China’s role is not just important—it’s indispensable.

Breakthrough Neurotech Promises New Frontiers in Brain Disease Research and AI Itself

In a stunning intersection of neuroscience and artificial intelligence, a team of international researchers has unveiled a revolutionary technology that could transform how we understand, treat, and simulate the human brain. The newly developed platform—called NeuroWeave—has been described as a “living interface” capable of mapping brain activity at an unprecedented level of detail and speed.

Developed through a collaboration between MIT, the University of Zurich, and the Tokyo Medical Robotics Institute, NeuroWeave combines nanoscale sensors with real-time neural modeling software. It allows scientists to track and decode brain signaling as it happens, offering insights into complex neurological disorders such as Alzheimer’s, Parkinson’s, ALS, and other degenerative diseases that have long eluded effective treatment.

But what’s grabbing global headlines isn’t just the medical potential—it’s the system’s implications for AI development. The NeuroWeave platform doesn’t just observe brain behavior; it translates biological cognition into dynamic computational blueprints, creating a new frontier in what’s now being called “organic AI modeling.”

According to lead researcher Dr. Leila Sakamoto, the goal is to bridge the gap between human brain mechanics and machine learning frameworks. “We’re not just learning about the brain—we’re learning how to replicate its problem-solving methods. This could lay the foundation for emotionally intelligent and context-aware AI systems that go far beyond current models,” she said at the project’s unveiling in Zurich.

Unlike traditional brain-computer interfaces, which often rely on invasive implants or generalized MRI scans, NeuroWeave uses biocompatible fiber threads to record individual neuron clusters without damaging surrounding tissue. That fidelity has already led to early breakthroughs in identifying the progression patterns of Alzheimer’s and the neurological triggers behind sudden cognitive decline.

Tech companies are taking notice. Sources close to the project say that OpenAI, DeepMind, and Neuralink have all expressed interest in licensing elements of the technology for AI training purposes. By mimicking how real neurons fire and adapt, developers believe they can create machine learning systems that are not only more efficient but also more aligned with human reasoning.

While ethical concerns remain, particularly around neural data privacy and potential misuse, the research team emphasized their commitment to open science and global medical equity. They have pledged to make the medical aspects of the platform freely available to research institutions in low-income countries.